#LancetGate - Doing something about scientific fraud

Post-COVID era cements the proof of global fraud and the need to destroy it

What is abjectly clear is that scientific fraud is widespread, orchestrated, funded, politicised and profitable in the extreme. It is also clear that scientific fraud is old.

COVID gene therapy science (and likely some of the COVID viral science) is riddled with fraud that is traceable straight to the direct beneficiaries of the fraud including entities like the pharma companies, Bill and Melinda Gates Foundation, regulatory bodies, NIH, NIAID, and by extension Anthony Fauci etc. Research and development, publication and funding are locked into an unvirtuous cycle which drives power, status, progression, profits and prominence within corporate and institutional science. It is possible to literally buy into existence scientific or medical dogma. Glyphosate was scientific dogma. Statins are medical dogma. So are many other care protocol pathways that effectively drive towards the use of a specific drug for the vast majority of patients who meet simplistic diagnostic gates. Such pathways are created by big pharma in conjunction with healthcare providers or authorities who are unaccountable and unidentifiable.

Outside of COVID, glyphosate is a proven, global fraud in which Monsanto funded and controlled the research output into the compound and then, using journals and other marketing outlets, claimed that the compound was completely non-toxic to human health and essentially harmless to the environment due to its natural degradation in soil, irrespective of the fact that by its nature, the most powerful herbicide known to man worked by virtue of doing harm to organisms. This has been proven by the tens of thousands of court cases ruled in favour of plaintiffs who have contracted cancer and been medically harmed through their exposure to or consumption of the chemical. The scale of this fraud is not reflected in the glyphosate cases, which should account for the consumption of the compound by the global populace.

Professor David Healy MD FRCPsych has been writing books about aspects of corruption in the field of psychiatry since his 2004 book, Let Them Eat Prozac. In 2012 he authored Pharmageddon, about how the arrangements put in place by the FDA to prevent a repeat of the tragedy of thalidomide have been systematically subverted, circumvented and gamed:

The means to protect ourselves from a recurrence of the thalidomide disaster have been our undoing. Product patents gave an incentive to pharmaceutical companies to produce blockbuster drugs — drugs that were so valuable to a company and its survival that the incentives to breach regulations and hide any safety data that might be inconvenient for the company are so huge that entire trials are hidden, that almost all trials are ghostwritten to ensure the data looks right, and no one, not even the FDA, has access to the data.

Healy speaks about Pharmageddon here.

Dr. David Wiseman PhD MRPharmS has, in a similar vein, been involved in identifying and calling out fraud in medical science for over 25 years. Recently, Wiseman has conducted detailed assessments of FDA authorisations of COVID gene therapies and molnupiravir, in which he highlighted major concerns that undermine the granted EUA. Here is a 35 minute presentation given to the World Council for Health on assessment and authorisation of COVID gene therapies and various medical treatments. Here is a long list of other presentations from Wiseman on COVID.

Both Healy and Wiseman repeatedly point out that scientific fraud is partly enabled by the total corruption of the peer review process, particularly when it comes to the review of corporate data and findings. In short, all data is proprietary and therefore private and controlled by the owner. This means that peer review of trial or research data is, by design first and data access second, locked out of really assessing the truth of a given research paper. Peer review is not by design concerned with verifying if raw data is real, true and accurate. This begs the obvious question of how any genuine and meaningful peer review is conducted in any journal if the data is unavailable. In short, it isn’t. What this requires is collusion between the authors of a paper, the owners of the data, and the journal(s). If such research is used for regulatory approval, the regulator would by default collude in the process. By the time you read a paper published in a peer review journal, you assume that it is published there because it has been peer reviewed and will be subject to further peer review but how do you know either one of those things has occurred? You simply do not. You take it on faith and make assumptions about what “peer review” means and involves. The vast majority of people have literally no idea of either.

Clues to the above phenomena exist inside COVID regulatory documentation. EMA, FDA and MHRA regulatory docs for COVID gene therapies all state, in varying language, that regulatory concerns were “discussed” and answered by the pharma company, to the satisfaction of the regulator. There is no provision of detailed answers or data. Why? Because the data is proprietary and private to the company, and the regulators and governments place corporate interests and the protection of corporate property over and above any public health and safety issue. This has been stated repeatedly by western governments in response to FOI requests for data relating to clinical trials. Regulators never investigated the truthfulness, accuracy or veracity of proprietary clinical and pre-clinical raw manufacturer data for COVID gene therapies. They simply looked at what data and narratives the manufacturers chose to present to them. The regulators did not attempt to reproduce any manufacturer findings anywhere, directly, indirectly or by proxy.

Lancet & NEJM Fraud

British medical journal The Lancet published a paper claiming to be based upon primary data around the use of hydroxychloroquine in the treatment of COVID. That paper effectively ended HCQ research and seriously impacted research into other existing drugs. It was based on totally falsified data and circumstances which are utterly laughable. The fraud was not detected by the Lancet’s peer review process, but rather by a spectrum of external observers. The Lancet then retracted the paper, but the damage to HCQ and, by association, other existing, generic and repurposed drugs as COVID treatments was done.

It is a common view that The Lancet and the New England Journal of Medicine played a wilful role in serving establishment and corporate agendas by publishing a fraudulent paper that undermined Trump and radically bolstered the COVID big pharma gene therapy narrative by deliberately destroying research into existing drugs, despite the fact that Fauci himself had published stating HCQ was a good compound for treating respiratory illness such as SARS.

Richard Horton, editor of The Lancet, also took to TV at the start of the “pandemic” to lobby the public for lockdown and draconian medical and social measures. Remember that such measures were based on literally zero scientific evidence on any historical basis, so Horton had nothing to back his demands and assertions. He has been proven to be nothing more than a charlatan with regards to COVID, yet he and The Lancet have faced zero repercussions. The NEJM also engaged in the publication (and simultaneous retraction) of the Surgisphere HCQ fraud, which proves that either both of the world’s “leading” medical journals have zero ability to detect even egregious fraud using their peer review processes prior to publication, or they committed fraud. Neither could defend its publishing when challenged externally by people who are less resourced than either journal, resulting in the simultaneous retraction. In an uncorrupted world, both of these journals would have been hauled into court and the court of public opinion. In the real and deeply corrupt world, these agents of the corporatocracy continue to do business as usual.

It is up to us to change that.

Scientist James Heathers wrote in the Guardian about ways that beneficial changes could be made, but he still steered clear of data access and the fact that peer review does not seek to reproduce scientific experimental findings.

The immediate solution to this problem of extreme opacity, which allows flawed papers to hide in plain sight, has been advocated for years: require more transparency, mandate more scrutiny. Prioritise publishing papers which present data and analytical code alongside a manuscript. Re-analyse papers for their accuracy before publication, instead of just assessing their potential importance. Engage expert statistical reviewers where necessary, pay them if you must. Be immediately responsive to criticism, and enforce this same standard on authors. The alternative is more retractions, more missteps, more wasted time, more loss of public trust … and more death.

The Lancet has made one of the biggest retractions in modern history. How could this happen?

Lancet Formally Retracts Fake Hydroxychloroquine Study Used By Media To Attack Trump

Is the Lancet complicit in research fraud?

“The Lancet has become a laughing stock”

Would Lancet and NEJM retractions happen if not for COVID-19 and chloroquine?

Coronavirus prophet Richard Horton is at it again

Corrupt gateways to a corrupt system

Journals are not the only gateway to the peer review and publication system. Preprint servers are research publication repositories that evaluate on a limited basis, and accept or reject articles, manuscripts etc that have yet to enter the full blown, formal peer review process.

A preprint, also known as the Author’s Original Manuscript (AOM), is the version of your article before you have submitted it to a journal for peer review.

What is a preprint server?

Preprint servers are online repositories which enable you to post this early version of your paper online.

In some academic disciplines preprint servers are now commonly used. Among the most well known are:

ArXiv (physical sciences)

SocArXiv (social sciences)

bioRxiv (biology)

There are, however, equivalents for most research areas.

Preprint servers are an opportunity to get your work out to your peers quickly. Although readers need to keep in mind that preprints will not have been through a formal peer review process.

What are the benefits of preprints?

Speed

Your manuscript can become available for others to read before the final version of it is published. As publication times can sometimes be lengthy, this gives other researchers a chance to see your work a lot quicker.

Authorship

The preprint is a public record that you published that research at that time. Your work will likely be assigned a digital object identifier (DOI).

Review

Posting your preprint allows other researchers to offer feedback that may help to improve your article before the more formal journal peer review process.

Research impact

The research presented in your preprint will be publicly available for other researchers or practitioners to cite and build upon more quickly.

One might first submit a paper to a preprint server where it can be viewed by the scientific community and critiqued, ahead of or at the same time as being submitted to a journal for formal peer review. However, journals generally forbid the duplicate submission of a paper to more than one journal at a time, forcing publication assessment into a linear, one-at-a-time process. Part of the stated reason is that there’s a possibility of duplicated work in the peer review process, although this doesn’t stand up to logic. In actual fact, the more people who peer review, the better.

Preprint doesn’t guarantee significant exposure or peer review. It is a platform for both possibilities, but it still forms a gateway to exposure, awareness and publication because preprint servers can and will reject papers without necessarily explaining why. This opacity is ripe for corruption.

But what if your work is rejected by the preprint servers and by any and all meaningful journals? Traditionally, you’re stuffed.

The Peer Review Process

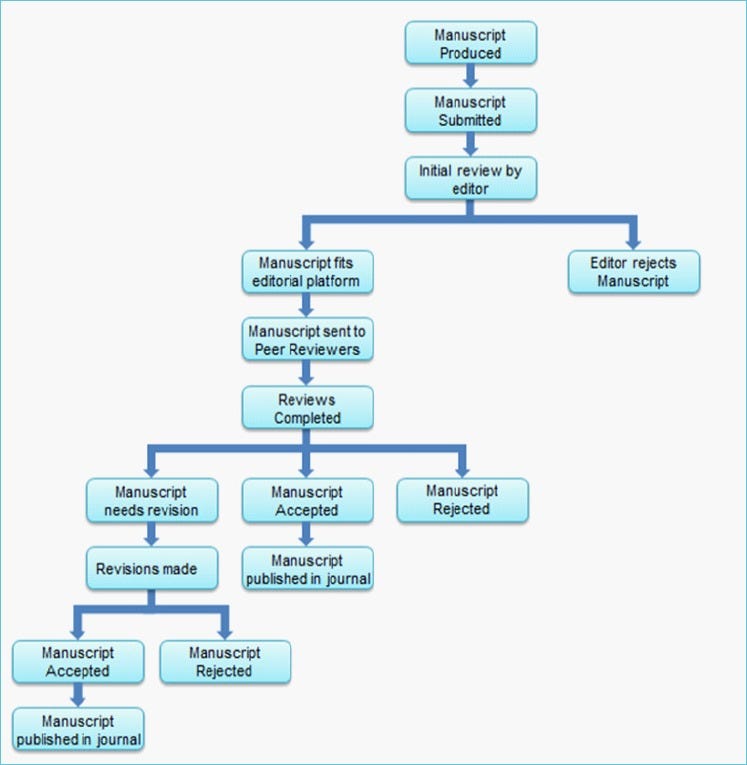

NIH’s article Peer Review in Scientific Publications: Benefits, Critiques, & A Survival Guide provides an overview of the model peer review process, including some critiques of the process. Two flow diagrams provide a visual outline of the entire journal publication process in which peer review is enshrined and the high level view of what an individual reviewer does.

Before we briefly consider the frankly profound and long standing critiques of the peer review process, consider this: literally nowhere in the NIH’s article does it state that any reviewer gains access to raw data and analyses or assesses that data against what the author is presenting in their paper. By implication, NIH is saying that reviewers only concern themselves with the paper as it is presented, which includes a data set. Therefore, if the data is fraudulent or manipulated, the reviewer may stand literally zero chance of identifying those issues because they will only be looking for inconsistencies or clues within a treated and presented data set and methodology. This is key and has been shown to be a fundamental fraud in COVID and glyphosate science, yet it is not mentioned by the NIH.

Considering that most if not all reviewers are unpaid, one must ask why people would do it. Some of the answers are given by the NIH and they are not great answers: they hinge largely around forms of explicit or implicit advantage, whether social, technical or commercial. Being paid nothing also means that bribery has a floor value of zero.

NIH’s key critiques of peer review

There is little evidence that the process actually works, that it is actually an effective screen for good quality scientific work, and that it actually improves the quality of scientific literature. As a 2002 study published in the Journal of the American Medical Association concluded, ‘Editorial peer review, although widely used, is largely untested and its effects are uncertain’ (25). Critics also argue that peer review is not effective at detecting errors. Highlighting this point, an experiment by Godlee et al. published in the British Medical Journal (BMJ) inserted eight deliberate errors into a paper that was nearly ready for publication, and then sent the paper to 420 potential reviewers (7). Of the 420 reviewers that received the paper, 221 (53%) responded, the average number of errors spotted by reviewers was two, no reviewer spotted more than five errors, and 35 reviewers (16%) did not spot any.

Peer review is not conducted thoroughly by scientific conferences with the goal of obtaining large numbers of submitted papers. Such conferences often accept any paper sent in, regardless of its credibility or the prevalence of errors, because the more papers they accept, the more money they can make from author registration fees (26). This misconduct was exposed in 2014 by three MIT graduate students by the names of Jeremy Stribling, Dan Aguayo and Maxwell Krohn, who developed a simple computer program called SCIgen that generates nonsense papers and presents them as scientific papers (26).

Peer review is often criticized for being unable to accurately detect plagiarism. However, many believe that detecting plagiarism cannot practically be included as a component of peer review. As explained by Alice Tuff, development manager at Sense About Science, ‘The vast majority of authors and reviewers think peer review should detect plagiarism (81%) but only a minority (38%) think it is capable. The academic time involved in detecting plagiarism through peer review would cause the system to grind to a halt’ (27). Publishing house Elsevier began developing electronic plagiarism tools with the help of journal editors in 2009 to help improve this issue (27).

Peer review has lowered research quality by limiting creativity amongst researchers. Proponents of this view claim that peer review has repressed scientists from pursuing innovative research ideas and bold research questions that have the potential to make major advances and paradigm shifts in the field, as they believe that this work will likely be rejected by their peers upon review (28). Indeed, in some cases peer review may result in rejection of innovative research, as some studies may not seem particularly strong initially, yet may be capable of yielding very interesting and useful developments when examined under different circumstances, or in the light of new information (28). Scientists that do not believe in peer review argue that the process stifles the development of ingenious ideas, and thus the release of fresh knowledge and new developments into the scientific community.

There are a limited number of people that are competent to conduct peer review compared to the vast number of papers that need reviewing. An enormous number of papers published (1.3 million papers in 23,750 journals in 2006), but the number of competent peer reviewers available could not have reviewed them all (29). Thus, people who lack the required expertise to analyze the quality of a research paper are conducting reviews, and weak papers are being accepted as a result.

Peer review is also criticized for being a delay to the dissemination of new knowledge into the scientific community, and as an unpaid-activity that takes scientists’ time away from activities that they would otherwise prioritize, such as research and teaching, for which they are paid (31).

[Regarding reviewer anonymity] The main disadvantage of reviewer anonymity, however, is that reviewers who receive manuscripts on subjects similar to their own research may be tempted to delay completing the review in order to publish their own data first (2).

When read fully, the NIH article provides a basic framework for personal, corporate, institutional and journal corruption to the extent that a motivated and resourced actor could easily map out all the points in the peer review process that they would need to control in order to effectively stitch up science, apart from withholding and faking their own data in a way that the peer review process could never detect. From a lay perspective and with the hindsight of COVID, the process is utterly laughable. Here is a simplistic re-framing of the process from a cynical point of view:

I conduct or commission biased or even fake private research and employ appropriate legal protections to exercise privacy, ownership and control over the data and as much of the research as possible. I may employ a ghostwriter, meaning that the named authors aren’t even writing the research and may have never even conducted it.

I find appropriate journal(s) that balance prestige, coverage and field expertise. If there is a way for me to lobby or influence the journal and its editor (funding, entertaining, access, bribery etc) I do that. If I can find out who their reviewers are I try to influence, co-opt or even bribe them as early in the process as is appropriate, including possibly involving them in future research and giving them first or high order authorship (irrespective of whether they do any real research for me).

Provided that my bent research is written well enough to be accepted by the journal’s editor (whether I got to them or not), it can then enter the journal’s peer review process (whether I got to the reviewers or not).

Even if the research contains errors, there are good odds that all or many will not be detected. The nature of those errors and the reviewers’ (in)ability to detect them still doesn’t necessarily expose the use of underlying fraudulent primary data, to which the reviewer won’t have access. If errors are detected, I stand the chance of being able to “correct” them appropriately and still get published.

If I can subvert the process in any way, my chances of getting bent research published in a prestigious journal go up and I stand to reap the downstream rewards.

I now repeat the above with different authors to make it look like separate research that overlaps with the other, whose findings reinforce the originals. In doing this over and over, I can create fake scientific dogma.

What has just been described above is largely what Monsanto did for decades of research into glyphosate, and it got away with it to the point that glyphosate is the most widely used herbicide on the planet and its use and monitoring within the end-to-end food chain is largely ignored. There is no formal testing for the presence of glyphosate in human food, despite the compound being sprayed on food in the growing and processing phases and it now being recognised as toxic and carcinogenic. This state of affairs has arisen because Monsanto took advantage of a system that is corruptible and then set about corrupting it and the people within it on a monumental scale. Rest assured, this is what has happened in COVID to the point that it is a witting and unwitting fraud on a global scale, the likes of which may not have been seen before.

Implied trust is utterly worthless

What peer review and its proven corruptibility demonstrates is that the implied trustworthiness it confers is literally worthless. Lay people who have no understanding of the process will often reject preprints because they are not peer reviewed, despite the fact that they have no greater ability to discern the validity of a peer reviewed paper.

What peer review could be said to come down to is the evaluation of whether a submitted paper makes sense within its own frame of reference and context, against a framework of logic and pre-existing knowledge. This leaves at least two gaping holes unaddressed. For “new” or innovative work, reviewers may have no ability to evaluate the scientific truth of the work and its claims because it is new. Without access to raw data there is potentially no means whatsoever to detect certain kinds of data fraud using a system that is not proven to actually work in the first place and has been repeatedly shown to be corruptible and inadequate. This is to say nothing of the downstream snowball effect of the consequences of the peer review process and its failings.

Good science is about reproducibility and proof

Nothing in peer review attempts to replicate the research and reproduce its results. Yet reproducibility is fundamental to the scientific method. There is an obvious tension between verification or validation and providing a third party with the means to completely reproduce a scientific process that one wishes to protect for commercial reasons. That’s not to say that there are not ways around this problem. Also, many aspects of scientific or academic publishing are not concerned with this problem at all (see Fenton & Neil, below).

Corrupt publication enables scientific and academic suppression

If it is not possible to publish one’s work, its visibility suffers and so too does its validation. This is a major problem in general, but an even bigger problem for those who rock the boat. The establishment has stitched up the process and thereby the access to the market of science, academia and ideas.

A current and ongoing example of this is provided by Professors Norman Fenton and Martin Neil et al of Queen Mary University of London. The authors of the “Where are the Numbers” Substack have been doggedly examining key data sets through the COVID “pandemic” and have repeatedly unearthed governmental and institutional data fraud and manipulation that has been actively employed in the COVID narrative to drive both public perception and action, globally. Needless to say, they have never been the establishment’s flavour of the month.

They have been hitting a key publication problem in the preprint server gateways. As explained in the article linked below, academic preprint servers are stonewalling their submitted papers that challenge aspects of the COVID narrative. These rejections are both opaque and likely systematic. Every non-COVID paper they have submitted has been accepted. Bear in mind that a preprint server does not conduct peer review. The servers simply act as repositories for yet-to-be-reviewed and published work.

Fenton & Neil’s work is entirely reproducible and has no proprietary protections on the underlying data. The methods are forms of mathematical analysis which are largely explained and the data is freely available in the public domain. This kind of academic analysis is perfectly verifiable and is in the public interest. For the work to be rejected by a preprint server stinks to high heaven and suggests that the servers are touched and wilfully engaging in suppression in support of the British government and wider COVID narrative.

Fixing both problems

There are two problems here:

Preprint publication

Peer review process and publication

Setting up a pre-print server is easy

The first problem is easily fixed.

A preprint server is just a box holding copies of papers that people know how to find. Anyone could run a preprint server.

All that is required is that a competing server is set up in an appropriate legal domain with minimally acceptable terms of service, which could largely hinge around a set of format rules, author declarations and a big “caveat emptor” that people are reading things that have not been peer reviewed or reproduced. Server costs crudely hinge around the amount of data served per month, costs for any management or maintenance of what’s on the server, and liabilities stemming from server operation or content. It would be very straightforward to rent a cheap server for dollars per month, set up a specific interface for submission and publication, then let people begin uploading. These costs would initially be so low that they could be paid for out of pocket by the person setting up the server. Literally hundreds of dollars or less while traffic is low. The users of the server could make voluntary donations to fund it and those whose work was published could pay a small fee to cover time and hosting. All straightforward. Some money would likely need to be spent on some form of publicity to advertise the server’s existence but this need not be high. As awareness grew, public donations could be solicited. Likely the costs would be easily recouped provided that the server was a broad church.
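To make the cost argument concrete, here is a rough back-of-envelope sketch in Python. Every number in it is a made-up assumption for illustration (paper counts, file sizes, download rates, rent, bandwidth price, volunteer time valued at zero), not a quote from any hosting provider, and it ignores legal liabilities entirely. The function name `monthly_cost` and its parameters are hypothetical.

```python
def monthly_cost(papers, avg_paper_mb, downloads_per_paper,
                 server_rent, price_per_gb_transfer,
                 admin_hours, hourly_rate):
    """Rough monthly cost: rent + data served + human time.

    All inputs are guesses supplied by the caller; nothing here is a
    real price list.
    """
    # Data served per month, converted from MB to GB.
    gb_served = papers * avg_paper_mb * downloads_per_paper / 1024
    transfer = gb_served * price_per_gb_transfer
    admin = admin_hours * hourly_rate
    return {
        "gb_served": round(gb_served, 1),
        "server_rent": server_rent,
        "transfer": round(transfer, 2),
        "admin": admin,
        "total": round(server_rent + transfer + admin, 2),
    }


# Hypothetical low-traffic start: 200 papers of 2 MB each, 50 downloads
# per paper per month, a cheap rented server, volunteer admin time.
estimate = monthly_cost(
    papers=200,
    avg_paper_mb=2,
    downloads_per_paper=50,
    server_rent=10.0,
    price_per_gb_transfer=0.05,
    admin_hours=5,
    hourly_rate=0.0,
)
print(estimate)
```

Swap in your own figures. The point is only that, under assumptions like these, hosting is a small term, and human time and liability are the costs that would need real thought as the server grew.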

Peer review process, journals and publication are big and complex

The second problem is more complicated, larger and difficult, but people are biting off chunks of the problem.

Genomics expert Kevin McKernan has used Substack as a totally free preprint server where he has published multiple technical articles on his work into the analysis of COVID gene therapy vial contents. That’s one way of dealing with the above problem that’s adequate for his needs and could be made workable on a larger and broader scale as well, subject to the whims of Substack management and the forces that they are subject to.

McKernan’s preprints are reproducible and that’s the point. Since he published them, multiple parties have now reproduced the results and thereby experimentally validated them at levels deeper than peer review would achieve alone. The entire experimental process has been validated, more data acquired and compared back to his raw data. The very act of scientific reproduction trumps peer review while at the same time serving to literally prove or disprove McKernan’s findings. This is exactly what scientists should want any analysis of their work to achieve. In designing and publishing his work like this, McKernan set out to make his work higher in quality and lower in cost through the act of publication. He used existing and new analysis protocols that others have validated and proven as practical and cost effective.

If McKernan combines all of his work with that of his colleagues engaged in review and reproduction, he will have exceeded the level of publishing quality he could have gotten from The Lancet or the NEJM, because none of their reviewers would have actually done any work reproducing McKernan’s experiments.

It costs little to publish content on the internet and there are plenty of ways to publish for literally zero. This means a new publication has no traditional print media costs at all and all that it would need to recoup are server and human time costs for managing the content and the processes in a given field of expertise. If those costs are low, the price of publishing one’s work can drop to radically undercut existing journals who trade on name but have flawed and corrupted processes and a legacy of fake science publication that was never reproduced.

Blockchain for publishing

Now, strap all that onto a blockchain and call it McKernan Genomics Monthly and hey presto, you’ve got a new scientific journal that:

is built around a practically unhackable, immutable encrypted ledger that tracks vast historical properties of publications submitted to it and what was done to the publications, by whom and when;

stands for and encourages actual scientific reproduction as a baked in part of its review and publication process, where possible and appropriate;

provides greater transparency by declaring and tracking persons and interests involved in the research’s validation, review, reproduction and publication;

could be transparently run and funded and held accountable to records held and publicly accessible on its blockchain.
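As a rough illustration of the kind of record such a journal would need to keep (what was done to a publication, by whom, when, and with what declared interests), here is a minimal Python sketch. The names `Event`, `Log`, `fingerprint` and the field layout are all hypothetical, and this is a plain in-memory log: it has none of the immutability or distribution that a blockchain would be expected to add on top.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


def fingerprint(manuscript_bytes):
    """SHA-256 digest identifying one exact version of a manuscript."""
    return hashlib.sha256(manuscript_bytes).hexdigest()


@dataclass(frozen=True)
class Event:
    paper: str      # fingerprint of the manuscript version acted on
    actor: str      # who did it
    action: str     # what was done: "submitted", "reviewed", "reproduced"
    interests: str  # interests declared by the actor
    when: str       # ISO 8601 UTC timestamp


@dataclass
class Log:
    events: list = field(default_factory=list)

    def record(self, manuscript_bytes, actor, action,
               interests="none declared"):
        """Append one event describing an action on a manuscript version."""
        event = Event(
            paper=fingerprint(manuscript_bytes),
            actor=actor,
            action=action,
            interests=interests,
            when=datetime.now(timezone.utc).isoformat(),
        )
        self.events.append(event)
        return event

    def history(self, manuscript_bytes):
        """Every recorded event for this exact manuscript version."""
        digest = fingerprint(manuscript_bytes)
        return [e for e in self.events if e.paper == digest]


log = Log()
v1 = b"Draft manuscript, version 1"
log.record(v1, "author_a", "submitted")
log.record(v1, "lab_b", "reproduced", interests="independent, unfunded")
log.record(b"Draft manuscript, version 2", "author_a", "submitted")

for e in log.history(v1):
    print(e.actor, e.action, e.interests)
```

Because each event is keyed to a digest of the manuscript bytes, changing a single character of the paper produces a different fingerprint, so reviews and reproductions stay attached to the exact version they were performed on.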

McKernan is also involved in using blockchain technology as a tool for scientific publishing. This is not a new concept and was discussed by Assange et al over ten years ago as a way to improve truthiness and integrity in publishing of information.

A Framework Proposal for Blockchain-Based Scientific Publishing Using Shared Governance is an article discussing how blockchain technology can be used to address or improve some but not all of the shortcomings found in the present publishing process.

Despite increases in the number and options to conduct scholarly publishing, concerns regarding peer-review quality, plagiarism, absence of community and patient engagement, publication bias, predatory publishing, the cost of OA publishing, and the opaqueness of the “pedigree” of scientific research are ongoing challenges (Rawat and Meena, 2014; Shamseer et al., 2017). Broader issues of academic integrity, reproducibility, and preventing data falsification and fraud are also at the forefront of debate focused on ensuring that public trust is maintained in the scientific process (Nurunnabi and Hossain, 2019). Many of these issues originate from macro issues associated with the academy and scientific research, including power relations among researchers, pressure to publish for academic job placement and career advancement, and the potential influence of conflicts of interest in the publication process (Young, 2009; Marcovitch et al., 2010).

One technology that has the potential to address some but not all of these challenges is blockchain, a distributed ledger technology (DLT) with use cases across several industries (e.g., financial services, healthcare, supply chain, energy, education, etc.) (Mengelkamp et al., 2017; Treleaven et al., 2017; Chen et al., 2018; Kim and Laskowski, 2018; Mackey et al., 2019). At its core, blockchain is a decentralized, peer-to-peer network that creates an immutable, chronological record of assets with transactions that can be on a public, private, or consortium-based blockchain (Mackey et al., 2019). Each “block” of data contains a “hash,” which is a unique identifier of the block that can link to the hash of the preceding block—thus creating a cryptographically linked chain of data (Zheng et al., 2017; Mackey et al., 2019). This “block” of “chained” data can be distributed across blockchain nodes or participants and is subject to “consensus” of the network. A blockchain solution can also have many feature layers that operate processes on the blockchain network by interacting with other data architecture, including cryptocurrencies and tokens, distributed applications (DApps), and smart contract.
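The “hash of the preceding block” idea in the passage above can be sketched in a few lines of Python. This is a toy under stated assumptions, not how any particular blockchain is implemented: it runs on one machine, has no network, no consensus and no smart contracts, and the function names (`block_hash`, `append_block`, `verify_chain`) and the example payload strings are invented for illustration. It only shows why editing an old entry becomes detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, payload, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    body = json.dumps(
        {"index": index, "payload": payload, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_block(chain, payload):
    """Append a new block that links to the hash of the current last block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    index = len(chain)
    chain.append({
        "index": index,
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": block_hash(index, payload, prev_hash),
    })
    return chain


def verify_chain(chain):
    """Return True only if every stored hash and every link is intact."""
    prev_hash = GENESIS
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev_hash"] != prev_hash:
            return False
        expected = block_hash(block["index"], block["payload"],
                              block["prev_hash"])
        if block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


chain = []
append_block(chain, "preprint submitted")
append_block(chain, "review comment added")
append_block(chain, "reproduction reported")
print(verify_chain(chain))  # True

# Quietly rewriting an earlier entry breaks its stored hash.
chain[1]["payload"] = "review comment quietly rewritten"
print(verify_chain(chain))  # False
```

On a single machine the tamperer could simply recompute every later hash, which is why the quoted description also relies on the chain being distributed across nodes and subject to network consensus.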

I’m not a scientist. Why should I care, let alone fund and support?

You and everyone else on the planet are direct consumers of science and academia, be it fake or real. You have the biggest vested interest in eradicating fraud and bullshit from both as a means to check egregious abuses of power. The entire COVID head fake was built on this corruption and on the trusting ignorance of not just the public but professionals and “experts” as well. This toxic environment has enabled all manner of bad outcomes across a range of fields, culminating in massive deaths and damage, e.g. thalidomide and the COVID gene therapies.

What is next in the pipe is the explosion of genetic engineering experiments conducted directly on the human race with practically no meaningful safeguards, oversight, review or right to redress. COVID is exactly this and there is exponentially more where that dog shit came from.

You can ignore it all and tell yourself it’s someone else’s problem, or that others are fixing the problem. You’d be lying to yourself. This is your problem and your kids’ problem. It’s everyone’s problem. There are people like McKernan around, but they are an ultra-micro minority and in no way can they compete with the establishment on a timeframe and scale that will stop what’s coming. The only way to rebalance power is to effectively crowdsource the resistance and the counterbalancing solutions, and to actively build the meaningful tools and knowledge of the resistance.

Knowing about fighting isn’t enough. Knowing how to fight isn’t enough. Knowing fighters isn’t enough. Being educated, trained, organised and connected into an effective fighting unit that actually fights is everything.

The destruction of the corrupt scientific and academic publishing establishment is a fight worth having because all state and corporate implementation and mandates flow from it. If you don’t want to be made to eat mRNA-injected meat, vegetables and processed food, you need to find uncorrupted and trustworthy ways to prove inarguably, one way or the other, whether the science behind such schemes is safe. The present peer review publication process clearly isn’t fit for this purpose and neither is the regulatory system that is built on top of it.

This is a huge but doable exercise that has many moving parts. One of the fundamental parts is explaining to as wide an audience as possible just how, why and to what extent the field of peer review and scientific publishing is corrupt, and what that corruption drives. Then one must spell out key steps to fix it and provide calls to action that the average Joe can get behind, with the ability to vet, test and keep tabs on the people doing the work. With that done, some form of crowdsourcing and crowdfunding can begin for major projects to create the future alternatives. Once built, people will come. Acceptance in the dogmatic fields of science will take time and there will be major fights and attacks along the way, but it is worthy work if you believe that a positive future for humanity depends upon good science and its applications.

David Healy is a psychiatrist, scientist, psychopharmacologist, and author.

Before becoming a professor of Psychiatry in Wales, and more recently in the Department of Family Medicine at McMaster University in Canada, he studied medicine in Dublin and at Cambridge University. He is a former Secretary of the British Association for Psychopharmacology, and has authored more than 220 peer-reviewed articles, 300 other pieces, and 25 books, including The Antidepressant Era and The Creation of Psychopharmacology from Harvard University Press, The Psychopharmacologists Volumes 1-3 and Let Them Eat Prozac from New York University Press, Mania from Johns Hopkins University Press, and Pharmageddon.

His latest and most important book is Shipwreck of the Singular: Healthcare’s Castaways. It documents how improvements in medicine which contributed to increasing our life expectancies have now turned inside out and are leading to shortened life spans. At the same time the climate of healthcare has turned toxic, with increasingly fraught encounters between staff and management, and between patients and services that are more concerned with managing risks to themselves than risks to us.

David’s main areas of research are clinical trials in psychopharmacology, the history of psychopharmacology, and the impact of both trials and psychotropic drugs on our culture.

He has been involved as an expert witness in homicide and suicide trials involving psychotropic drugs, and in bringing problems with these drugs to the attention of American and European regulators, as well as raising awareness of how pharmaceutical companies sell drugs by marketing diseases and co-opting academic opinion-leaders, ghost-writing their articles.

David is a founder and CEO of Data Based Medicine Limited, which operates through its website RxISK.org, dedicated to making medicines safer through online direct patient reporting of drug side effects.

David and his colleagues recently established RxISK eConsult, an online medication consultation service to answer the question “Could it be my meds?”


As a layperson I’m in for crowd sourcing blockchain

I would like to see another nation bring this before the ICC. No western nation will have the guts from what I see. Africa, India, you know, the rising BRICS nations. It would go further in exposing them and the UN for the global West puppet they are.

Great article. Thank you.