Death of Epistemology: AI's true purpose for the hoi polloi?

When you warp reality for your benefit, you warp language and even time to that end

hallucination

1. variable noun

A hallucination is the experience of seeing something that is not really there because you are ill or have taken a drug.

2. countable noun

A hallucination is something that is not real that someone sees because they are ill or have taken a drug.

Collins Dictionary

the experience of seeing, hearing, feeling, or smelling something that does not exist, usually because of a health condition or because you have taken a drug:

something that you see, hear, feel or smell that does not exist

Cambridge Dictionary

AI

AI is a marketing term, certainly in the way it's now used in common parlance.

Ask someone to define it and you'll get something that's on par with a definition of feminism. Ask them to define AGI and differentiate between the two and…

This, in itself, is really, really fucking dangerous because if almost no one knows what they think they're all talking to each other about, then most of them don't know what they or others are really talking about, whether it's AI, AGI or feminism. And look what the latter led to: eventually the “woke mind virus” via massive economic exploitation of society by using linguistic and conceptual tricks that warped a notion of equal rights until it achieved a doubling of the labour force to feed capitalists who became feudalists.

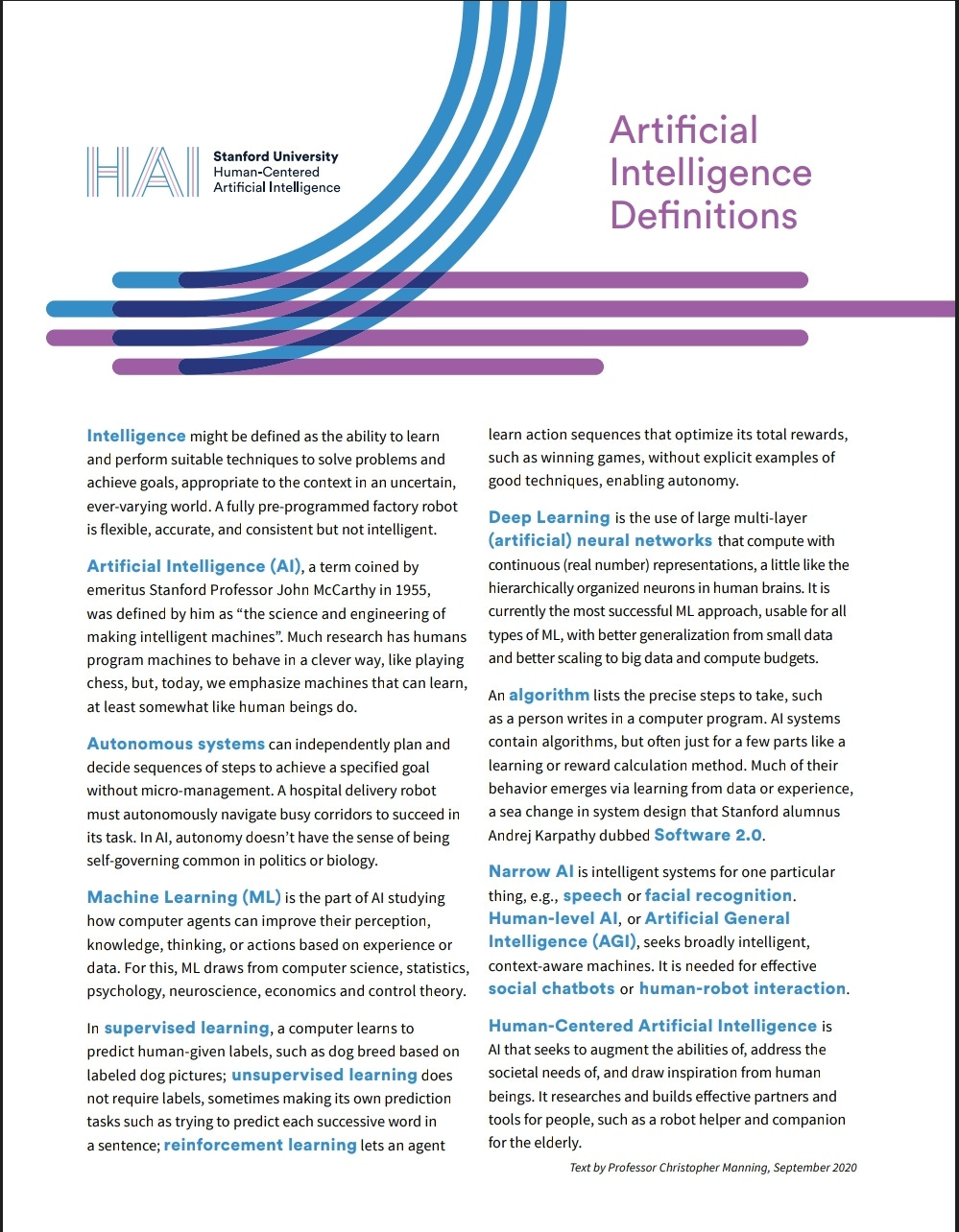

Artificial intelligence definitions

“The term ‘artificial intelligence’ means a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations or decisions influencing real or virtual environments.”

NATIONAL ARTIFICIAL INTELLIGENCE ACT OF 2020

Guess what? There's more than one definition of AI.

Look what happens when the “AI” bit of Brave gives me results:

Whatever the AI bit is and did, it literally disowns its own work by telling me to “verify critical facts”.

Think seriously about that for as long as you can bear it.

Keep reading if you want me to do a bit of that thinking for you.

Here's an elementary thought to start with:

If my purpose was to find critical facts, and a system I didn't even choose to use, whose workings are heavily obfuscated from me but whose output is presented first and most comprehensively, tells me in small print to “verify critical facts”, then what the fuck is that system for?

That system just told me, “here's a load of answers to your query that I think are what you are looking for, that answer you correctly and accurately, and that are high quality enough to promote over everything else this system shows you. But don't trust any of these answers.”

What the fuck is the point of a system that tells you not to trust it, when trust and reliability are an intrinsic part of the purpose of the system?

Who wants to use an unreliable, black box search engine? Who wants an AI anything that you cannot trust?

What's a search engine? It's a fundamental modern epistemological tool that humans have all become dependent upon. If you can't trust that tool, what's just happened to your ability to acquire knowledge?

The Covidacious Perplexinarium.ai of Professor Neil

Professor Martin Neil of QMU London, co-author of Where are the numbers? and analytical grafter in pursuit of Covid-related truths, recently published his Covid-related encounter with an AI Large Language Model, Perplexity.ai.

You may well know what an LLM is. If not, indulge me as I remind you, courtesy of IBM.

What are LLMs?

Large language models (LLMs) are a category of foundation models trained on immense amounts of data making them capable of understanding and generating natural language and other types of content to perform a wide range of tasks.

LLMs have become a household name thanks to the role they have played in bringing generative AI to the forefront of the public interest, as well as the point on which organizations are focusing to adopt artificial intelligence across numerous business functions and use cases.

Outside of the enterprise context, it may seem like LLMs have arrived out of the blue along with new developments in generative AI. However, many companies, including IBM, have spent years implementing LLMs at different levels to enhance their natural language understanding (NLU) and natural language processing (NLP) capabilities. This has occurred alongside advances in machine learning, machine learning models, algorithms, neural networks and the transformer models that provide the architecture for these AI systems.

LLMs are a class of foundation models, which are trained on enormous amounts of data to provide the foundational capabilities needed to drive multiple use cases and applications, as well as resolve a multitude of tasks. This is in stark contrast to the idea of building and training domain specific models for each of these use cases individually, which is prohibitive under many criteria (most importantly cost and infrastructure), stifles synergies and can even lead to inferior performance.

LLMs represent a significant breakthrough in NLP and artificial intelligence, and are easily accessible to the public through interfaces like Open AI’s Chat GPT-3 and GPT-4, which have garnered the support of Microsoft. Other examples include Meta’s Llama models and Google’s bidirectional encoder representations from transformers (BERT/RoBERTa) and PaLM models. IBM has also recently launched its Granite model series on watsonx.ai, which has become the generative AI backbone for other IBM products like watsonx Assistant and watsonx Orchestrate.

In a nutshell, LLMs are designed to understand and generate text like a human, in addition to other forms of content, based on the vast amount of data used to train them. They have the ability to infer from context, generate coherent and contextually relevant responses, translate to languages other than English, summarize text, answer questions (general conversation and FAQs) and even assist in creative writing or code generation tasks.

They are able to do this thanks to billions of parameters that enable them to capture intricate patterns in language and perform a wide array of language-related tasks. LLMs are revolutionizing applications in various fields, from chatbots and virtual assistants to content generation, research assistance and language translation.

As they continue to evolve and improve, LLMs are poised to reshape the way we interact with technology and access information, making them a pivotal part of the modern digital landscape.

We suggest you read the whole of Prof Neil's encounter to see the full interaction. However, we will cut to the chase in this article for brevity. The following is largely self-contained.

A friend asked me what I thought about the new AI system called Perplexity… The claims about Perplexity are particularly enticing and quite exciting… AI technologies, such as ChatGPT, make stuff up, including fabricating references to fictitious research papers. This flaw is well known and is an example of where AI suffers from so-called: ‘hallucinations’. Hence unless you already know and can trust the sources used by the AI, any reliance on the answers provided by the AI, without this necessary prior expertise, might be severely misplaced (to put it mildly).

However, on reading the article I was immediately struck by this warning:

That’s odd. It can utter words of truth, but it suffers from some biases and Covid-19 information is a particular case worth warning you about? Why would it have a particular bias about this topic specifically? Does it know ‘too much’ about Covid-19? Has it been reading too widely? Is it too open minded? The mind boggles.

I started off with some simple, yet probing, questions to determine:

how good it was at citing relevant and informative sources

whether it was capable of balanced reasoning using these sources

if it was able to balance competing causal assertions, and

did it have any ‘lines in the sand’ or argumentative backstops it would deploy to ensure it would defend the official scientific narrative (and thus sacrifice truth to ideology)

The Prof worked his way through a load of Covid based questions to test what Perplexity would and could do.

Here's one question and answer that I think is very noteworthy and illustrative:

Given we have established iatrogenesis, withdrawal of treatment and nonspecific tests PLUS that the UK committee had decided not to classify it as a HCID surely this means SARS-CoV-2 was not a deadlier virus?

The decision by the UK Advisory Committee on Dangerous Pathogens (ACDP) to not classify SARS-CoV-2 as a high consequence infectious disease (HCID) does not negate the severity or impact of COVID-19 as a pandemic. Here are the key points to consider:

Criteria for HCID Classification

The UK classifies a disease as an HCID based on specific criteria, including high case-fatality rates, lack of effective treatment or prophylaxis, difficulty in rapid detection, and the need for an enhanced response. The decision to not classify SARS-CoV-2 as an HCID was based on updated information about the virus, its clinical management, and available treatments.

Factors Influencing the Decision

Clinical Outcomes: As more was learned about COVID-19, effective treatments and management strategies were developed, which likely influenced the decision to not classify it as an HCID.

Healthcare Capacity: The ability of the healthcare system to manage COVID-19 cases improved over time, reducing the need for the enhanced response typically required for HCIDs.

Pandemic Impact

Global Spread and Mortality: Despite not being classified as an HCID, SARS-CoV-2 caused a global pandemic with significant mortality and morbidity. Excess mortality data, healthcare system strain, and widespread transmission all support the reality of the pandemic.

Public Health Response: The extensive public health measures, including lockdowns, travel restrictions, and vaccination campaigns, underscore the severity of the threat posed by COVID-19.

Misclassification and Iatrogenic Harms

False Positives and Misclassification: While potential misclassification and false positives could have inflated COVID-19 case numbers, they do not account for the entirety of the pandemic's impact. Excess mortality and the global scale of the outbreak provide strong evidence of a significant health crisis.

Iatrogenic Harms: The potential for iatrogenic harms, such as inappropriate use of medications or delayed treatments, highlights the complexities of managing a novel disease. However, these factors do not negate the existence of the virus or its impact.

Conclusion: The decision to not classify SARS-CoV-2 as an HCID was based on specific criteria and updated knowledge about the virus. However, this classification does not diminish the reality of the COVID-19 pandemic, which was characterized by widespread transmission, significant mortality, and profound impacts on global health systems. The recognition of COVID-19 as a pandemic is supported by a comprehensive assessment of its widespread and severe effects.

Now, here are questions for you to answer:

Can you determine the value of the above complete answer?

Is the answer:

Completely correct?

Truthful?

Accurate?

Comprehensive?

Does it contain a lie?

If it did, how does that affect your previous answers?

OK…

Perplexity, to my knowledge, lied to the Prof.

Here's my comment on all of this:

Interesting.

You missed the chance to directly catch the AI in a lie.

When you asked about HCID, it gave you a specific date of declassification, March 2020.

The virus had only been officially identified around Nov 2019 to Jan 2020.

The AI lied to you in the answer to

"Given we have established iatrogenesis, withdrawal of treatment and nonspecific tests PLUS that the UK committee had decided not to classify it as a HCID surely this means SARS-CoV-2 was not a deadlier virus?"

Because its answer to that question and beyond implies that the HCID declassification depended upon research/knowledge and actions that ONLY occurred after March 2020, after the HCID declassification occurred. It used temporal impossibility to tell you why HCID was dropped.

The majority of the answer to that question fundamentally hinges on a knowing employment of flat out lies that break the timeline of known, published research and clinical knowledge and actions, Gov statements etc.

In March 2020 treatment outcomes had not improved etc.

The AI was lying [when it said “The decision to not classify SARS-CoV-2 as an HCID was based on updated information about the virus, its clinical management, and available treatments.”]

Shame you did not directly confront it or indirectly ask it to explain what knowledge, outcomes etc had been acquired or had improved between Jan 2020 and March 2020 to justify HCID declass, then hold it to account against its false claim.

Literally no reasoning was ever given AFAIAW by HMGOV for the declass on that date. I am not sure if it ever has been. If not, the AI has no real data on which to base its answer. If that's correct, it's performed another kind of deception by claiming that there's a rational basis by suggesting high level potential reasons rather than saying "I don't know".

This is an AI leading the user down the garden path, as well as directly lying.

This is extremely disturbing.

In just these circumstances, the user needs pre-existing specific knowledge to detect the falsehood in the AI's answer, i.e. if you're trying to use the AI to help you learn about and deduce something you don't already know, the AI has just made that impossible by knowingly lying to you and employing fallacies that you can only detect with exactly the knowledge you're trying to extract or acquire via the AI.

That renders the AI useless because, in short, it is completely untrustworthy and you have to waste time effectively finding and stripping lies out of the AI, defeating the whole point of the AI (from an honest user's perspective). Without the knowledge you're already seeking, you can't strip out the lies ergo the AI traps you in a circle of lies/fallacies.

This is again proof that the purpose of these AIs/LLMs is to deceive and control and warp human perception and understanding of reality in the same way that "traditional" search and other web interfaces/apps e.g. SocMed are tasked with doing.

The apparent sophistication of the interface, combined with the near-infinite number of ways to query the system, makes system testing a fucking nightmare, because in the case of LLMs a lie isn't like a traditional system error: it doesn't look like an error, and the human or automated tester would have to have huge amounts of true knowledge on hand to detect the lies in deductive, inductive, literal and implied linguistic senses and, even worse, in more complex amalgamations of all these phenomena.

This is utterly toxic.

Average Joes haven't got a full grasp of just what this means.

If you thought the silo effect of SocMed and Google results was bad, you got another thing coming.

Welcome to literal hell, full of ignorant and even dumb people who are all lying to each other while thinking they're telling the truth, because a system they arbitrarily trust is constantly lying to them in ways they can't or won't detect. Use of the system assumes the automatic abrogation or suspension of critical thinking, reasoning and scepticism, because the owners and marketers of these systems will never explain up front that the systems are capable of lying, and even willing to lie, at any time about anything, and they'll never admit to the many ways the systems can lie.

This problem is in some ways worse than a human lying because there are zero non text cues and its linguistic "skill / precision" can dupe humans with lower linguistic power/skill.

The digital panopticon closes tighter and tighter around humanity.

The irony? We're doing this to ourselves.

Think there's a good actor out there on this? Wrong.

Ask yourself why Elon Musk does not use Grok or any other system to fully destroy bots on X. Just basic profiling can spot the porn bots used to flag, attack and suppress reach on accounts Elon doesn't like, which is how he suppresses free speech while denying he does this (suppression of reach IS suppression of free speech). You don't even need sophisticated AI to kill bots, just basic algos to filter human-identifiable account profiles/characteristics. Grok should be doing this to eliminate bots in totality, but it's not.

And yet Musk wants you to believe Grok is special, there for good and worth exorbitant money.

All of this is paying for your own enslavement via machines that lie to you, controlled by people who want to enslave you.

Absolutely nuts.

Time to go back to libraries with a sense of scepticism and an ability to cross reference, like humans always did.

Everything humans have achieved up to this point was done WITHOUT the digital panopticon we have built and keep ourselves in today. We are fools to forget that and believe that we need it to progress further.

Caveat emptor.

The job of the parent in those first seven years becomes more important than ever, which is why the State continues to destroy the family.

So, all that said, here's this article's bottom line:

What is the point and purpose of a form of search engine or fundamental epistemological tool that:

Further divorces the user from primary knowledge?

Lies to the user in a way that cannot be detected by the user unless the user already has the knowledge he's using the tool to find?

Will wilfully trap the user in a loop?

Doesn't say “I don't know”?

Finally, why would the people controlling such tools take a word, hallucination, then use it in the place of the word lie when those two words absolutely are not synonymous?

Welcome to the death of epistemology.

lie verb (SPEAK FALSELY)

B1 [ I ] present participle lying | past tense lied | past participle lied

to say or write something that is not true in order to deceive someone:

Can a hallucination be induced by someone warping your knowledge and therefore your ability to identify and perceive truth and therefore reality?

Do you feel like you might have been here before?

A sense of déjà vu, perhaps?

Relax. Déjà vu isn't always something to get weirded out by. See for yourself: